- cross-posted to:

- technology@lemmy.ml

This looks sweet! I’m gonna give it a try after work

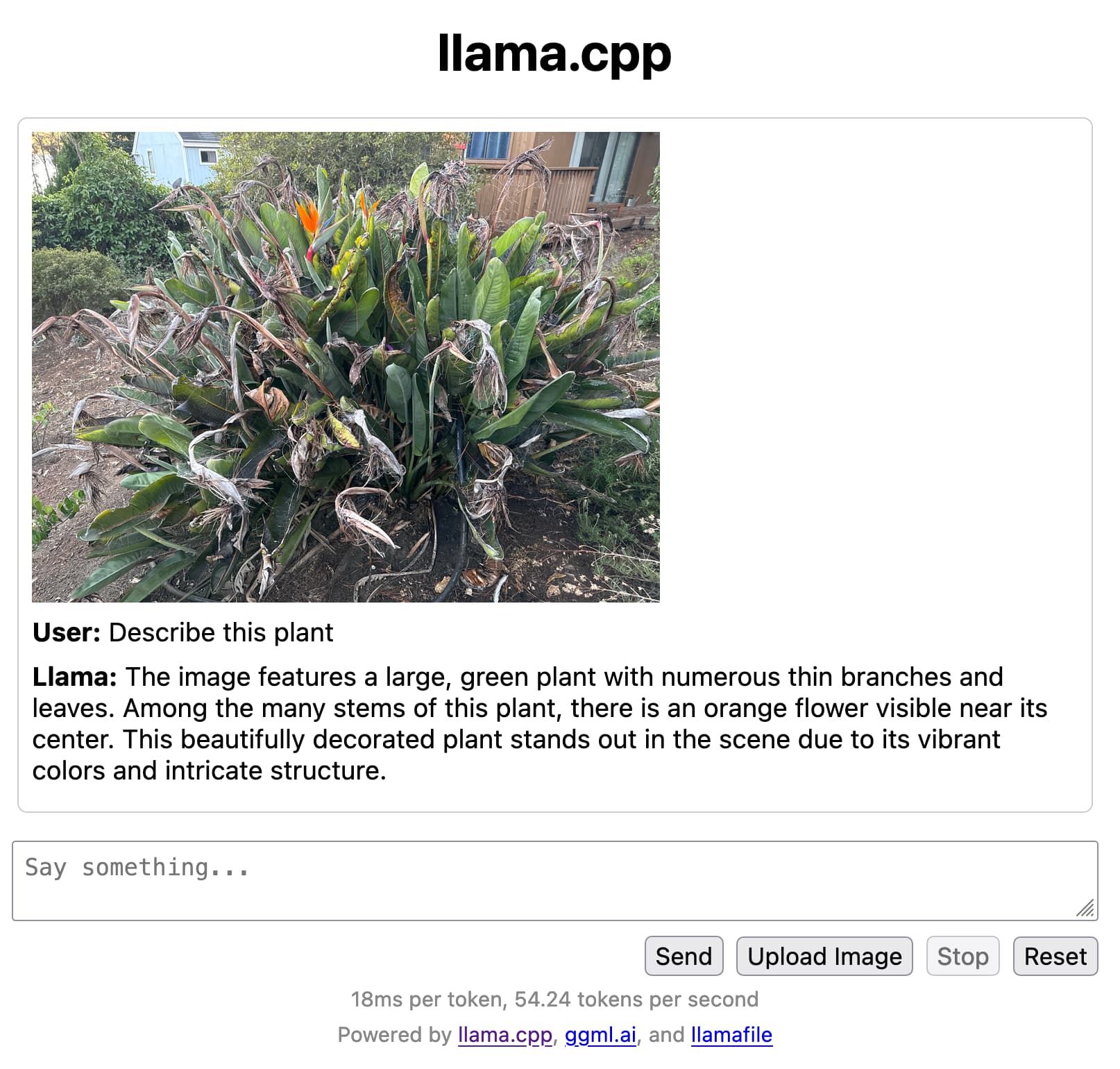

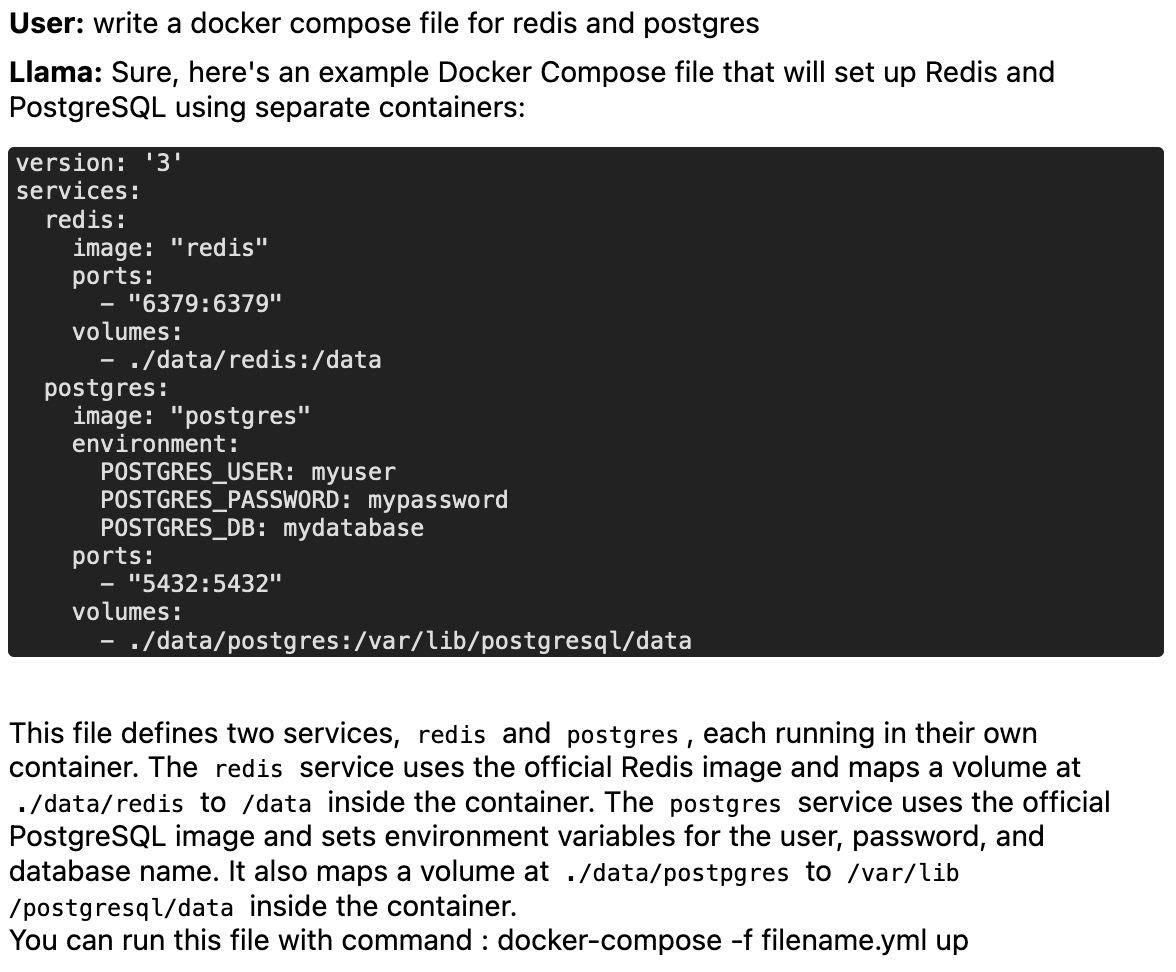

It’s pretty neat, and it’s actually kinda handy as a personal Stack Overflow. For example, you can ask questions like this and get useful results.

What kind of specs do you need to run one of these?

Seems like 4 GB of RAM is enough for the smaller models, and after that the main difference is how fast it generates responses, which depends on how good your CPU is.
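
For a rough feel of where a number like 4 GB comes from, here is a back-of-the-envelope sketch. It is not from the article: the parameter counts and the 4-bits-per-weight figure are assumptions for illustration, and the helper name `approx_weight_gib` is made up. It only counts the weights, so real memory use will be somewhat higher once context and runtime overhead are added.

```python
def approx_weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Rough size of the model weights alone, in GiB (ignores context and overhead)."""
    total_bytes = n_params * bits_per_weight / 8
    return total_bytes / 2**30


# Assumed example sizes; swap in whatever model you actually plan to run.
for name, n_params in [("3B", 3e9), ("7B", 7e9), ("13B", 13e9)]:
    size = approx_weight_gib(n_params, bits_per_weight=4)
    print(f"{name}: ~{size:.1f} GiB of weights at 4 bits per weight")
```

If the estimate lands close to your total RAM, expect it to be tight; picking a smaller model or a lower-precision file is the usual way out.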

The URL in the article looks dead, but here’s one with a working llamafile: https://huggingface.co/jartine/llava-v1.5-7B-GGUF/tree/main