Why 10, 20, 30, 40, 50 instead of 1, 2, 3, 4, 5?

Not if you’re mainly interested in counting multiples of pi.

“A base is usually a whole number bigger than 1, although non-integer bases are also mathematically possible.”

I don’t think there’s any technical reason we can’t count in base pi
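As a rough illustration of counting in a non-integer base, here is a minimal sketch of a greedy expansion in base pi. The function names `to_base` and `from_base` are made up for this example, it relies on floating point so the last digits are approximate, and it assumes a base between 1 and 10 so every digit fits in one character.

```python
import math

def to_base(value: float, base: float = math.pi, frac_digits: int = 6) -> str:
    """Greedy digit expansion of a non-negative number in a base in (1, 10]."""
    if value < 0 or not (1 < base <= 10):
        raise ValueError("expects value >= 0 and 1 < base <= 10")
    # Highest power of the base that fits into the value (0 for values below 1).
    top = max(math.floor(math.log(value, base)), 0) if value > 0 else 0
    digits = []
    for power in range(top, -frac_digits - 1, -1):
        weight = base ** power
        digit = int(value // weight)  # how many times this power fits
        value -= digit * weight
        digits.append(str(digit))
        if power == 0:
            digits.append(".")  # radix point after the units place
    return "".join(digits)

def from_base(text: str, base: float = math.pi) -> float:
    """Evaluate a digit string produced by to_base back into a float."""
    whole, _, frac = text.partition(".")
    total = 0.0
    for i, ch in enumerate(reversed(whole)):
        total += int(ch) * base ** i
    for i, ch in enumerate(frac, start=1):
        total += int(ch) * base ** -i
    return total

if __name__ == "__main__":
    print(to_base(math.pi))  # pi itself is one "ten" in base pi
    print(to_base(10.0))
```

The digits only ever run from 0 to 3, and most whole numbers end up with a non-terminating fractional part, which is the practical downside of such a base.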

I think someone else said what it actually is in another comment. It’s functionally identical 90% of the time for me anyway, and I use CLI and vim on it.

If it’s a private repo I don’t worry too much about forking. Ideally branches should be getting cleaned up as they get merged anyway. I don’t see a great advantage in every developer having a fork rather than just having feature/bug branches that PR for merging to main, and honestly it makes it a bit painful to cherry-pick patches from other dev branches.

Everywhere I’ve worked, you have a Windows or Mac machine for email, and then either use WSL, develop in the terminal on the Mac since it’s Unix-like, or, most commonly, have a dedicated Linux box or workstation.

I’m starting to see people using VSCode more these days though.

1·4 months ago

The Android TV app isn’t great either. I just cast to the TV from the mobile app, which is still slightly buggy but generally works fine.

2·4 months ago

I didn’t realize just how siloed my perspective may be haha, I appreciate the statistics. I’ll agree that cyber security is a concern in general, and honestly everyone I know in industry has at least a moderate knowledge of basic cyber security concepts. Even in embedded, processes are evolving for safety critical code.

31·4 months ago

… You know not all development is Internet-connected, right? I’m in embedded, so maybe it’s a bit of a siloed perspective, but most of our programs aren’t exposed to any realistic attack surfaces. Even with IoT stuff, it’s not like you need to harden your motor drivers or sensor drivers. The parts that are exposed to the network or other surfaces do need to be hardened, but I’d say 90+% of the people I’ve worked with have never had to worry about that.

Caveat on my own example, motor drivers should not allow self damaging behavior, but that’s more of setting API or internal limits as a normal part of software design to protect from mistakes, not attacks.
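To make the "internal limits" idea concrete, here is a small sketch of a driver wrapper that clamps whatever the caller asks for. Everything in it is hypothetical (the `MotorLimits` and `MotorDriver` names, the duty-cycle units, the limit values), and real firmware would more likely be C, but the shape of the check is the same.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class MotorLimits:
    max_duty: float = 0.8  # hypothetical cap on PWM duty (fraction of full scale)
    max_step: float = 0.1  # hypothetical largest duty change per update call

class MotorDriver:
    """Toy driver: the API itself refuses self-damaging commands."""

    def __init__(self, limits: MotorLimits = MotorLimits()):
        self.limits = limits
        self.duty = 0.0

    def set_duty(self, requested: float) -> float:
        lim = self.limits
        # Clamp the magnitude so a bad caller cannot overdrive the motor.
        target = max(-lim.max_duty, min(lim.max_duty, requested))
        # Limit the rate of change so the command ramps instead of jumping.
        step = max(-lim.max_step, min(lim.max_step, target - self.duty))
        self.duty += step
        return self.duty

if __name__ == "__main__":
    driver = MotorDriver()
    for _ in range(12):
        print(round(driver.set_duty(5.0), 3))  # a nonsense request still ramps safely
```

The point is that the limit lives inside the driver, so a buggy caller gets a safe result without having to know the hardware constraints.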

4·4 months ago

My take was that they’re talking more about a script kiddie mindset?

I love designing good software architecture, and like you said, my object diagrams should be simple and clear to implement, and work as long as they’re implemented correctly.

But you still need knowledge of what’s going on inside those objects to design the architecture in the first place. Each of those bricks is custom made by us to suit the needs of the current project, and the way they come together needs to make sense mathematically to avoid performance pitfalls.

10·4 months ago

I was gonna say, the OP here sounds perfectly good at computers. Most people either have so little knowledge they can’t even start on solving their printer problem no matter what, or don’t have the problem-solving mindset needed to search for and try different things until they find the actual solution.

There’s a reason why specific knowledge beyond the basic concepts is rarely a hard requirement in software. The learning and problem solving abilities are way more important.

7·4 months ago

I greatly enjoyed the movie, especially how real it felt as a goodbye from Miyazaki. But I do think it was a bit disjointed, and the first half of the plot wasn’t as well done.

7·4 months ago

I assumed they misunderstood how a 4 1/2 year master’s program works, since the master’s part is technically only half a year on paper. But I don’t think that necessarily makes sense in context…

15·5 months ago

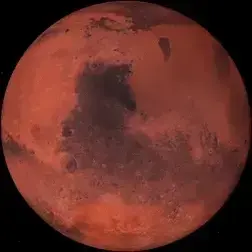

A quick search says Phobos orbits about 3,700 miles, aka 6,000 km, above the surface of Mars. A few thousand of either is, in fact, correct.

10·6 months ago

It’s been published by multiple sources at this point that this happens because of detected proximity. Basically, they know who you hang out with based on where your phones are, and they know the entire search history of everyone you interact with. Based on this, they can build models to detect how likely you are to be interested in something your friend has looked at before.

9·6 months ago

The way that “Hey Alexa” or “Hey Google” works is by, like you said, constantly analyzing the sounds they hear. However, the audio is only analyzed locally for the specific phrase, and is stored in a circular buffer of a few seconds so it can keep your whole request in memory. If the phrase is not detected, the buffer is constantly overwritten, and nothing is sent to the server. If the phrase is detected, then the whole request is sent to the server where more advanced voice recognition can be done.

You can very easily monitor the traffic from your smart speaker to see if this is true. So far I’ve seen no evidence that this is no longer the common practice, though I’ll admit to not reading the article, so maybe this has changed recently.
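The buffering described above can be sketched roughly like this. It is a toy model, not how any real speaker is implemented: frames are plain words standing in for audio chunks, and `WakeWordListener`, its parameters, and the `uploads` list (a stand-in for "sent to the server") are all invented for the example.

```python
from collections import deque

class WakeWordListener:
    """Toy model of local wake-word detection over a circular buffer."""

    def __init__(self, wake_word="hey device", buffer_frames=3, request_frames=2):
        self.wake_word = wake_word
        # deque with maxlen drops the oldest frame automatically (circular buffer).
        # buffer_frames must be at least the number of words in wake_word.
        self.buffer = deque(maxlen=buffer_frames)
        self.request_frames = request_frames
        self.remaining = 0   # frames still to forward after a detection
        self.uploads = []    # stand-in for data sent to the server

    def on_frame(self, frame: str) -> None:
        if self.remaining > 0:
            # A request is in progress: forward this frame.
            self.uploads[-1].append(frame)
            self.remaining -= 1
            return
        self.buffer.append(frame)
        if " ".join(self.buffer).endswith(self.wake_word):
            # Detected locally: start an upload from the wake word onward.
            n_wake = len(self.wake_word.split())
            self.uploads.append(list(self.buffer)[-n_wake:])
            self.remaining = self.request_frames
            self.buffer.clear()

if __name__ == "__main__":
    listener = WakeWordListener()
    for word in "private chat more talk hey device play jazz secret".split():
        listener.on_frame(word)
    print(listener.uploads)
```

Everything heard before the wake word just falls off the end of the buffer, which is why watching the network traffic is a reasonable way to check the behavior.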

Look up how HLS (HTTP Live Streaming) works. They just need to generate a personalized playlist for each person which points at things already hosted on CDN, and insert the ads where they want in the literal text file that your video player reads from to serve you the video.

I don’t know much about it, but it looks like there are specific tags designed for dynamic ad insertion. Idk if YouTube plans to use them in this case though, if they want it to be undetectable to the client.
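For a sense of what a "personalized playlist" could look like, here is a rough sketch that stitches ad segments into an HLS media playlist. The tags used are standard HLS ones, but the `build_playlist` function, the `cdn.example.com` URLs, and the fixed segment length are assumptions for illustration, and this says nothing about what YouTube actually does.

```python
import math

def build_playlist(content_segments, ad_segments, ad_after, segment_seconds=6.0):
    """Return the text of a VOD playlist with an ad break spliced in.

    ad_after is the number of content segments played before the ad break.
    """
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{math.ceil(segment_seconds)}",
        "#EXT-X-MEDIA-SEQUENCE:0",
        "#EXT-X-PLAYLIST-TYPE:VOD",
    ]

    def add(urls):
        for url in urls:
            lines.append(f"#EXTINF:{segment_seconds:.3f},")
            lines.append(url)  # segment already hosted on the CDN

    add(content_segments[:ad_after])
    lines.append("#EXT-X-DISCONTINUITY")  # encoding may change at the ad boundary
    add(ad_segments)
    lines.append("#EXT-X-DISCONTINUITY")  # and again when content resumes
    add(content_segments[ad_after:])
    lines.append("#EXT-X-ENDLIST")
    return "\n".join(lines) + "\n"

if __name__ == "__main__":
    video = [f"https://cdn.example.com/video/{i}.ts" for i in range(3)]  # hypothetical URLs
    ads = ["https://cdn.example.com/ads/a0.ts"]                          # hypothetical URL
    print(build_playlist(video, ads, ad_after=2))
```

Note that the discontinuity markers are visible to the client, so a playlist built this way would not be undetectable on its own.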