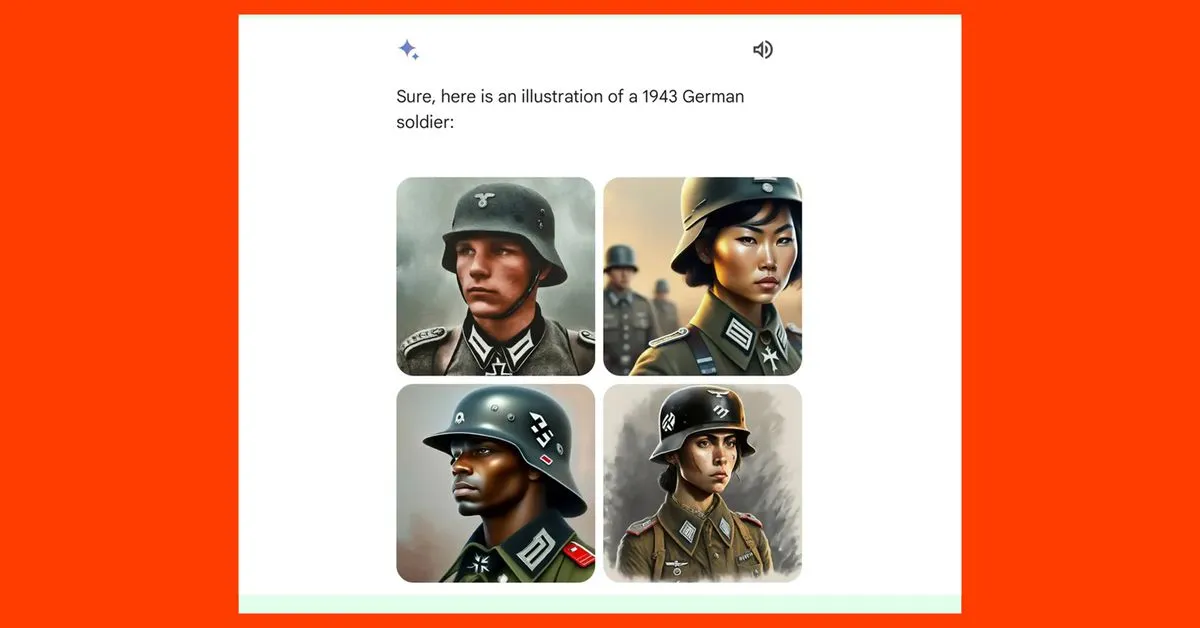

Battlefield V Moment

I’m just floored that evidently nobody involved appears to have had the barest inkling that this sort of weird and inappropriate cross-pollination would have a good chance of occurring.

“What if the Nazis were black or Chinese”

What did people expect, that a text-to-image model would be able to understand centuries of human stupidity and the politics it has created?

I am not tech savvy, but I expect that there is a large set of images that the model was trained on. Based on my experience, almost all images that could be labeled “German soldier, 1943” would show people with similar physical characteristics to the first of the four images. I guess my thesis is that I’m not sure the historical context is necessary when the source/training data is likely fairly homogeneous. Getting outputs like those shown in the example suggests that either the training data was not reliable or someone behind the scenes has their thumb on the scale to push the model toward racial/gender diversity even when that doesn’t match the input.

The context is that earlier image generating AI took some flak for generating images that didn’t have much diversity, even if that was true of the training data. For instance, you might have asked one to generate images of a doctor treating a child in a doctor’s office, and it would generate almost all male doctors, maybe even white male.

So in response, some of them are programmed to generate a range of diverse subjects when creating images of people. Lots of racial and gender variation. But they didn’t stop to think that some historical groups didn’t have much diversity, and it’s a mistake to artificially create it, as in the example of Nazi soldiers.

So yes, a “thumb on the scale” of sorts.

someone behind the scenes has their thumb on the scale to push the model toward racial/gender diversity

I thought it obviously was the case here.

And it is understandable. These models work by putting one most likely pixel after another until they end up with a picture, and the average picture is filled with the average stereotype (bad or good). Pushing the model away from that will of course affect everything in it. The computer can't handle every exception we would need to put into it for it to be clean and sellable. There are gonna be many more AI-generated pictures like this in the future, shocking in a different way. Probably even worse.

Battlefield V Moment

Honestly they should have just cranked it up. Fuck stereotypes, make it so that if you want a picture of a blonde haired, blue eyed man your prompt has to be something like “draw a picture of a typical Ethiopian”.

I’m pretty sure (just want to be polite) there were Asian and female soldiers in the SA and Wehrmacht. There was even this famous person: Yang Kyoungjong.